
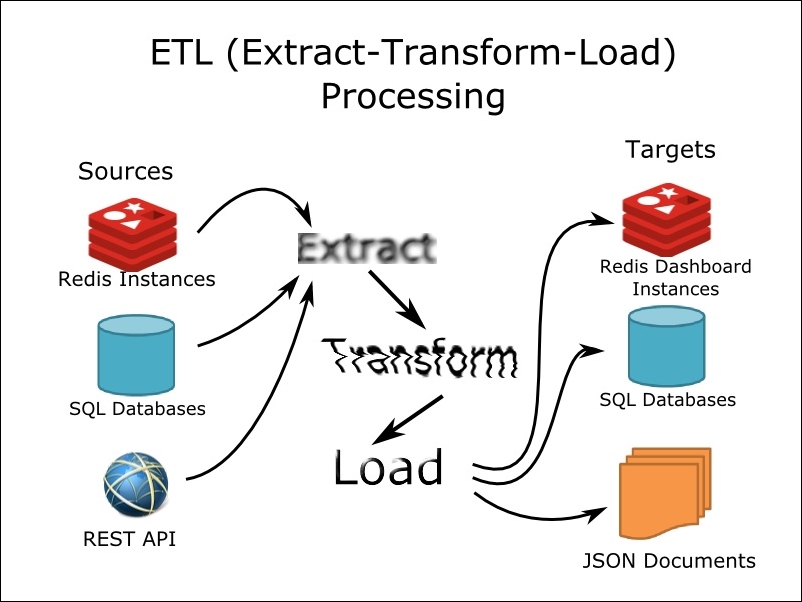

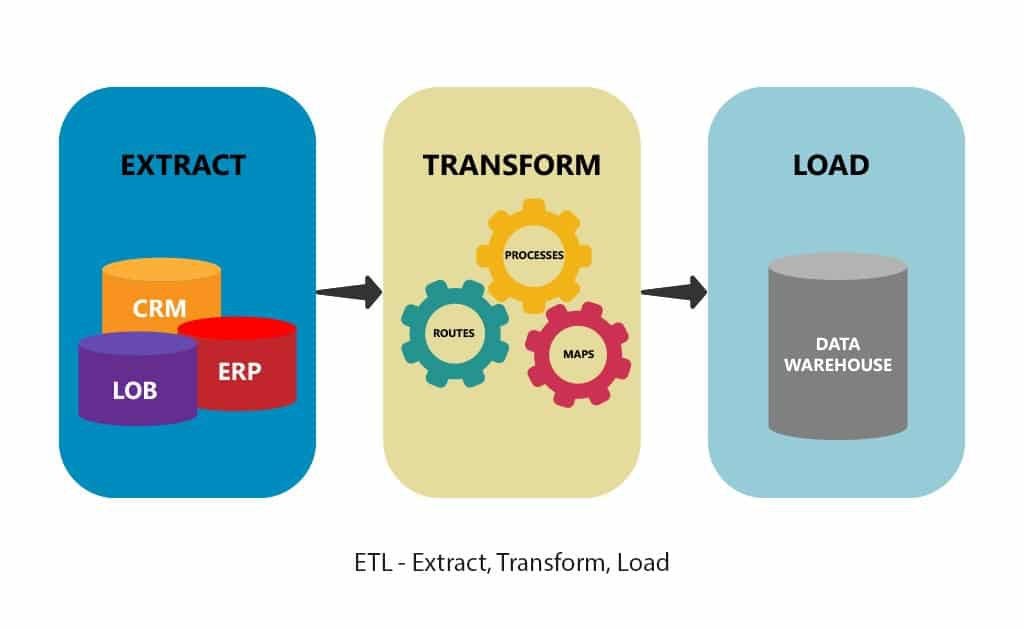

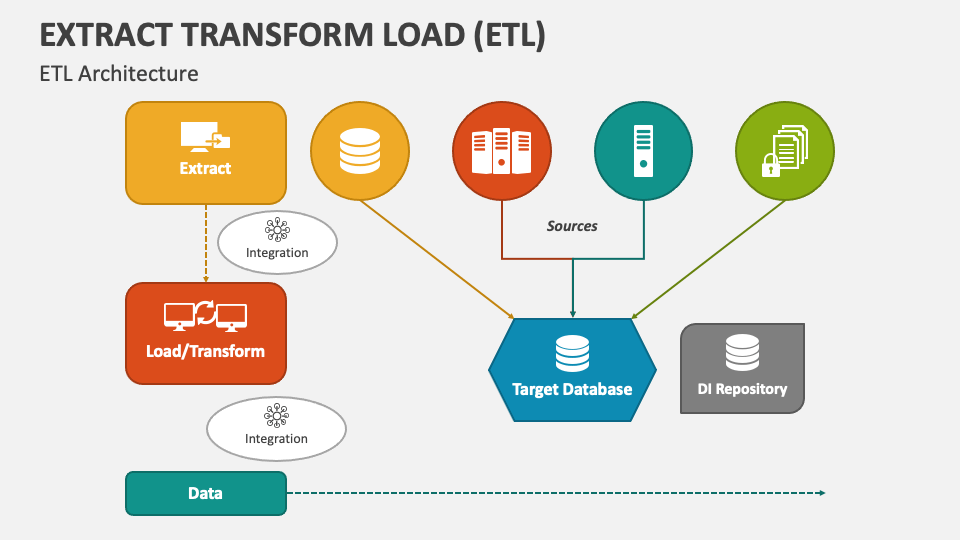

Extract, transform, and load, or ETL, is a process used to extract data from various sources and transform it into a format that can be loaded into a database or other system for analysis.

ETL is a key component of many data processing pipelines. It involves three distinct phases: extracting data from the source system where it is stored, transforming that data to meet the needs of the target system or application, and then loading the transformed data into that system or application.

What Does ETL Stand For

ETL stands for extract, transform, and load, which is a process used in data analysis to transform raw data into clean, usable information.

What Is the Purpose of ETL

At the core of ETL is the idea of moving data from one place to another in order to make it more usable. This typically involves extracting the data from its original source, manipulating it through various transformations or operations, and then loading it into another system where it can be analyzed and used for decision-making. There are many different factors that come into play when performing an ETL process. Some of these might include choosing which data sources to use and how best to access them, selecting which transformation operations will be most effective, and choosing the most appropriate data storage solution for the outputted data.

Overall, ETL is an important process that can help businesses gain valuable insights from their data. By extracting and transforming data in a systematic way, organizations are able to make more informed decisions about how to use this information to drive their business forward.

How Does ETL Work

An ETL workflow typically involves three main stages: extracting, transforming, and loading. The first step in an ETL process typically involves identifying and accessing the relevant source data. This may involve using various tools and technologies to connect to different sources of data, ranging from simple flat files on local servers to more complex databases hosted on remote servers.

Once you have connected to your desired sources of data, you can begin extracting the relevant information from them into a staging area for further processing. Once the data has been extracted, the next step of the ETL process involves transforming that data to meet the needs of your target application or system. This typically involves manipulating and combining the data in different ways to fit your specific requirements. For example, you may need to join multiple tables together or perform calculations on certain values to derive new information.

The final step in an effective ETL process is loading the transformed data into your target system or application. This is usually accomplished using some form of database management system, such as MySQL or PostgreSQL, which enables you to easily manage and store large amounts of data within a single repository. Depending on the complexity and size of your dataset, it may also be necessary to break up this stage of the ETL process into multiple smaller steps to ensure that any errors or issues can be effectively identified and resolved.

What Is an ETL Pipeline

An ETL pipeline is a key component of any data warehousing system. This pipeline consists of three main stages: extraction, transformation, and loading.

During the extraction stage, data is extracted from various sources such as transactional databases, flat files stored on external file systems, web-based applications, or other similar data repositories. This step involves extracting the raw data in its original format and structure as it exists in the source system.

During the transformation stage, this raw data is manipulated to transform it into an optimized format that will be easier to work with when loaded into the target database or application. The goal of this stage is to extract only relevant information while discarding unnecessary or redundant data elements and to normalize the data into a format that is optimal for the target application.

Finally, during the loading stage, the transformed data is loaded into a staging area or directly into the target system. It may also be necessary first to load this data into a temporary staging area before it is finally loaded into the target system in order to allow any validation or error checking prior to final storage. Overall, an ETL pipeline plays an essential role in helping organizations effectively manage and analyze their vast amounts of data by enabling them to extract, transform, and load this information quickly and efficiently.
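As a rough illustration of the extract, transform, and load phases described in this article, here is a minimal Python sketch. Everything in it is an assumption made for the example: the two inlined CSV "files", the column names, the table names `staging_orders` and `orders`, and the use of an in-memory SQLite database as a stand-in for a system such as MySQL or PostgreSQL. It reads raw rows, discards an incomplete record, joins the two sources, derives a total, and validates the result in a staging table before copying it to the target table.

```python
import csv
import io
import sqlite3

# Hypothetical source data: two flat files, inlined here as strings.
ORDERS_CSV = """order_id,customer_id,quantity,unit_price
1,10,2,9.50
2,11,1,20.00
3,10,,5.00
"""
CUSTOMERS_CSV = """customer_id,name
10,Ada
11,Grace
"""


def extract(text):
    """Extract: read raw rows from a flat file in their original form."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(orders, customers):
    """Transform: drop incomplete rows, join the two sources, derive a total."""
    names = {c["customer_id"]: c["name"] for c in customers}
    rows = []
    for o in orders:
        if not o["quantity"] or o["customer_id"] not in names:
            continue  # discard rows that cannot be used downstream
        total = int(o["quantity"]) * float(o["unit_price"])
        rows.append((int(o["order_id"]), names[o["customer_id"]], round(total, 2)))
    return rows


def load(conn, rows):
    """Load: write to a staging table, validate, then copy to the target."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS staging_orders "
        "(order_id INTEGER, customer TEXT, total REAL)"
    )
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, total REAL)"
    )
    conn.execute("DELETE FROM staging_orders")
    conn.executemany("INSERT INTO staging_orders VALUES (?, ?, ?)", rows)
    # Simple validation step before final storage.
    bad = conn.execute(
        "SELECT COUNT(*) FROM staging_orders WHERE total < 0"
    ).fetchone()[0]
    if bad:
        raise ValueError(f"{bad} staged rows failed validation")
    conn.execute("INSERT INTO orders SELECT * FROM staging_orders")
    conn.commit()


def run_pipeline(conn):
    """Run the three stages in order and return the loaded rows."""
    rows = transform(extract(ORDERS_CSV), extract(CUSTOMERS_CSV))
    load(conn, rows)
    return conn.execute(
        "SELECT order_id, customer, total FROM orders ORDER BY order_id"
    ).fetchall()


if __name__ == "__main__":
    print(run_pipeline(sqlite3.connect(":memory:")))
```

Keeping each stage in its own function mirrors the idea of breaking the process into smaller steps, so a failure can be traced to extraction, transformation, or loading. A production pipeline would likely replace the inlined strings with real connections to source systems and use a validation rule suited to its own data.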
